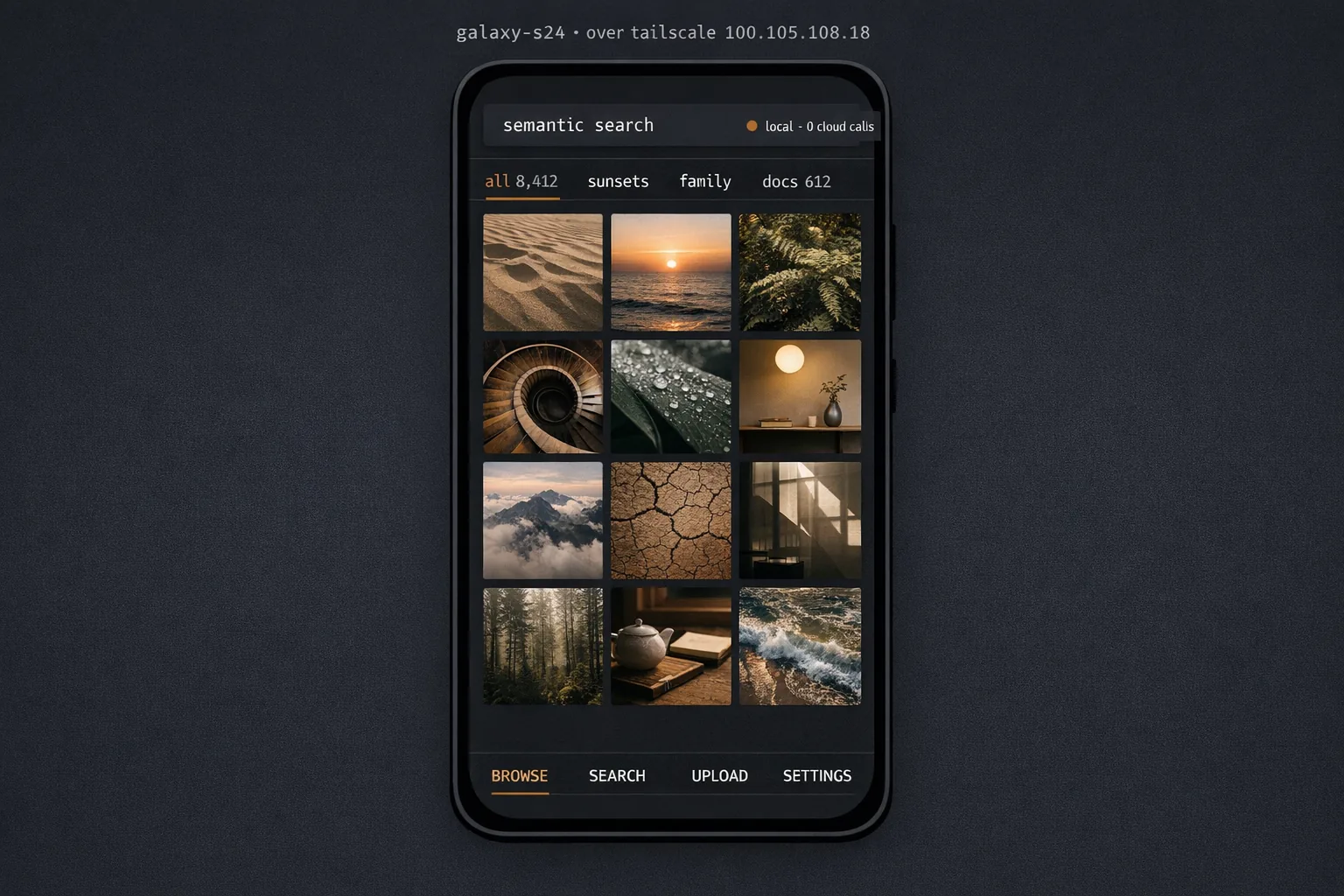

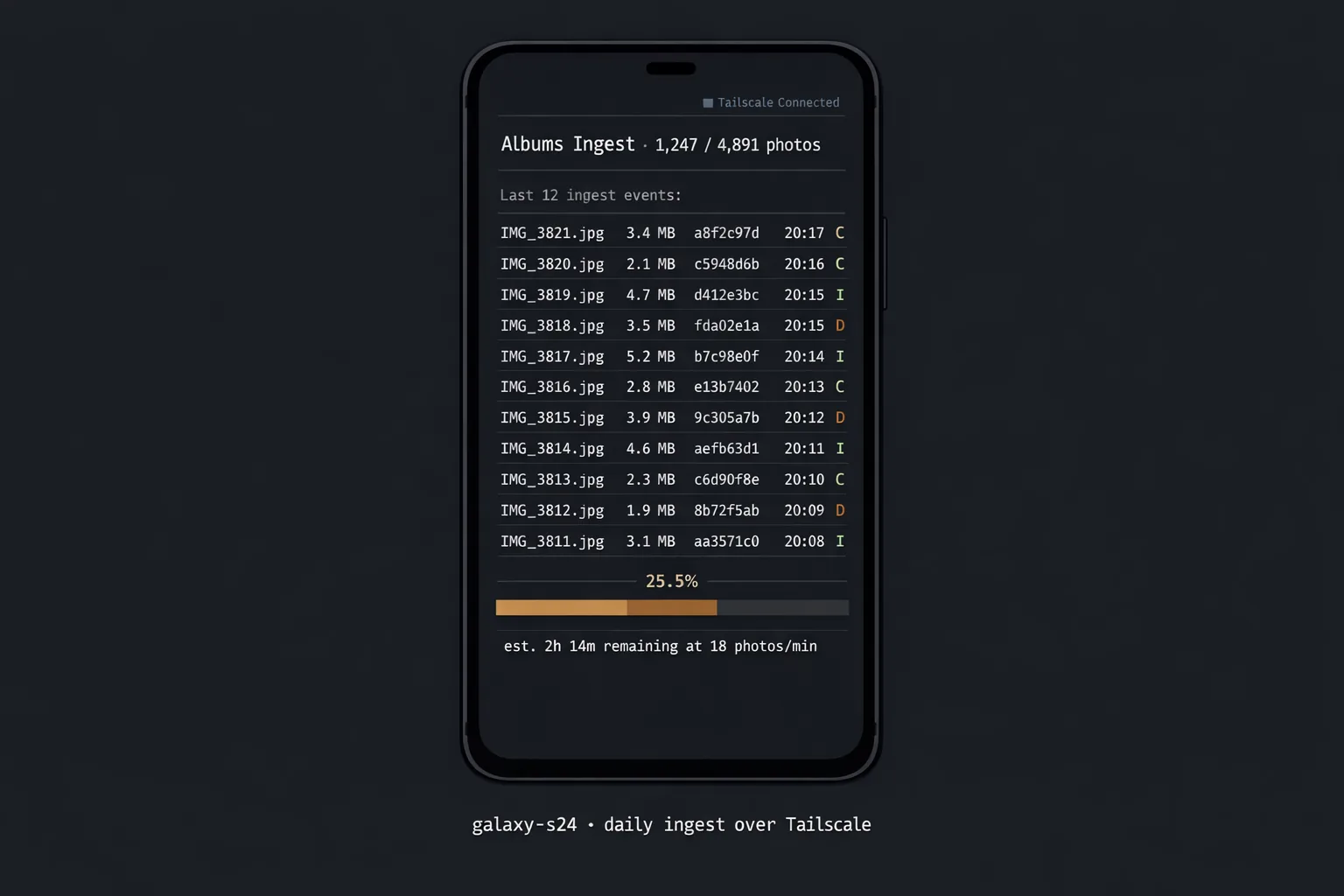

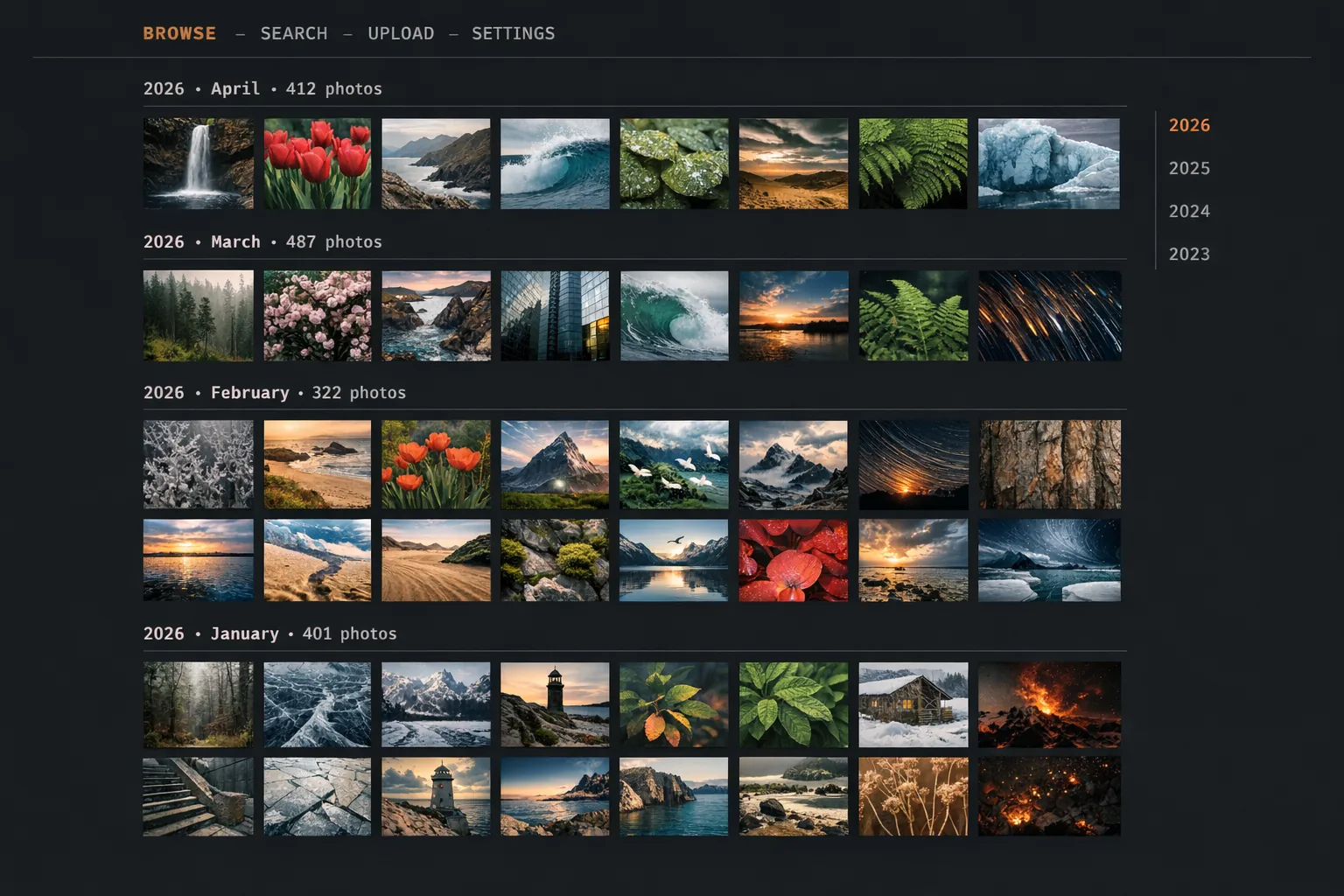

self-hosted · daily ingest from a Galaxy S24

Albums

- Built for: People who want to delete their Google Photos and Google Drive (not migrate them, delete them) and keep everything on their own hardware with their own indexes.

- Not built for: Family-sharing or multi-user collaboration. Albums is single-user data sovereignty, full stop.

Photos that matter shouldn’t be hostages to a subscription, a scan-your-face-for-the-algorithm policy change, or a quarterly pricing rebrand. Albums is a self-hosted personal cloud — auto-ingest from the phone, full-text and semantic search, AI captioning, all running on hardware I already own.

The problem

Google Photos is excellent until it isn’t — until the next pricing change, the next policy update, the next time the “memories” feature surfaces something I don’t want surfaced. Drive is the same, with the added detail that I don’t actually know what its model trains on. Both are convenient because they’re cloud, and the cloud is convenient because it’s also someone else’s.

Albums replaces that pair with a small box in my closet. The phone uploads to it over Tailscale, the box runs the indexes, the mobile app browses everything, and I keep the originals — and the captions, and the embeddings, and the audit log of what was ingested when.

Decisions

kept

Tailscale, not a public IP. The whole reason I’m self-hosting is to take this off the open internet; punching a hole in my router would defeat that. Tailscale gives the phone a stable address for the box on a private WireGuard-based network, with no port forwarded on the router.

kept

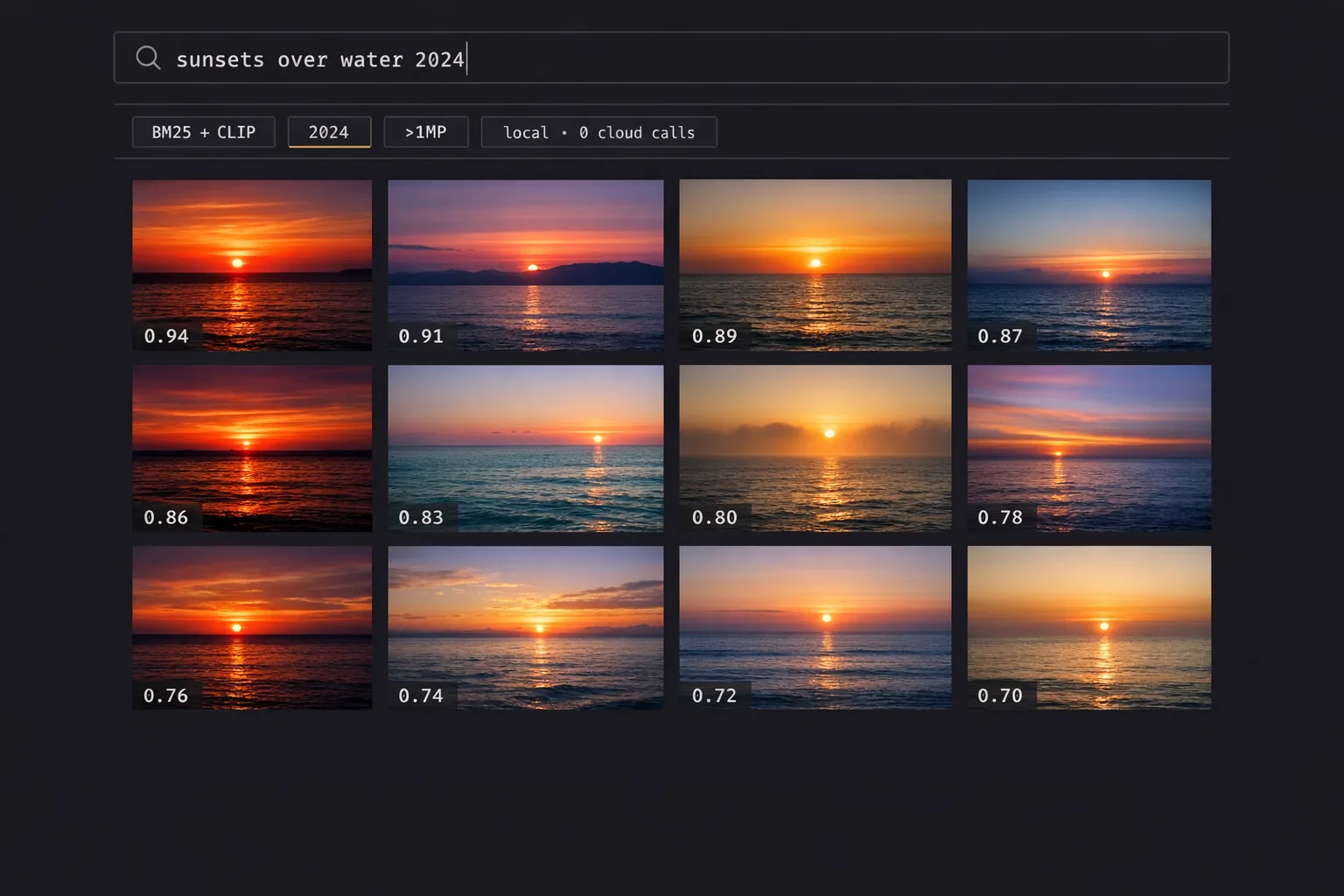

CLIP for semantic search, BM25 for text. Two indexes, two strengths: CLIP nails “photos with sunsets,” BM25 nails “PDFs about that warranty letter.” Combining the two covers both kinds of query, which neither index handles alone.

refused

A cloud-hosted compute fallback for the AI captioning. The whole premise is that nothing leaves the box. If the box can’t caption fast enough, the answer is a faster box, not a remote API.
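The source does not say how the CLIP and BM25 result lists are merged. One common option is reciprocal rank fusion, sketched below as an assumption rather than as the method Albums actually uses. The function name, the constant `k = 60` (a conventional default), and the sample asset IDs are all illustrative.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse several ranked lists of asset IDs into one ranking.

    Each list contributes 1 / (k + rank) per asset, with rank starting at 1,
    so an asset that appears near the top of several lists rises to the top.
    """
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, asset_id in enumerate(ranking, start=1):
            scores[asset_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by asset ID for determinism.
    return sorted(scores, key=lambda a: (-scores[a], a))


# Hypothetical top hits from each index for a single query.
clip_hits = ["beach", "sunset", "forest"]
bm25_hits = ["warranty", "beach", "receipt"]
fused = reciprocal_rank_fusion([clip_hits, bm25_hits])
print(fused)
```

Rank-based fusion avoids having to put CLIP cosine similarities and BM25 scores on a common scale, which is why it is a frequent first choice for hybrid search.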

System

| Layer | Implementation | Purpose |

|---|---|---|

| Backend | FastAPI · Python 3.13 | Ingest · indexes · search · audit |

| Storage | SQLite + flat-file | Originals on disk, indexes in SQLite |

| Search | CLIP + BM25 | Hybrid: semantic + lexical |

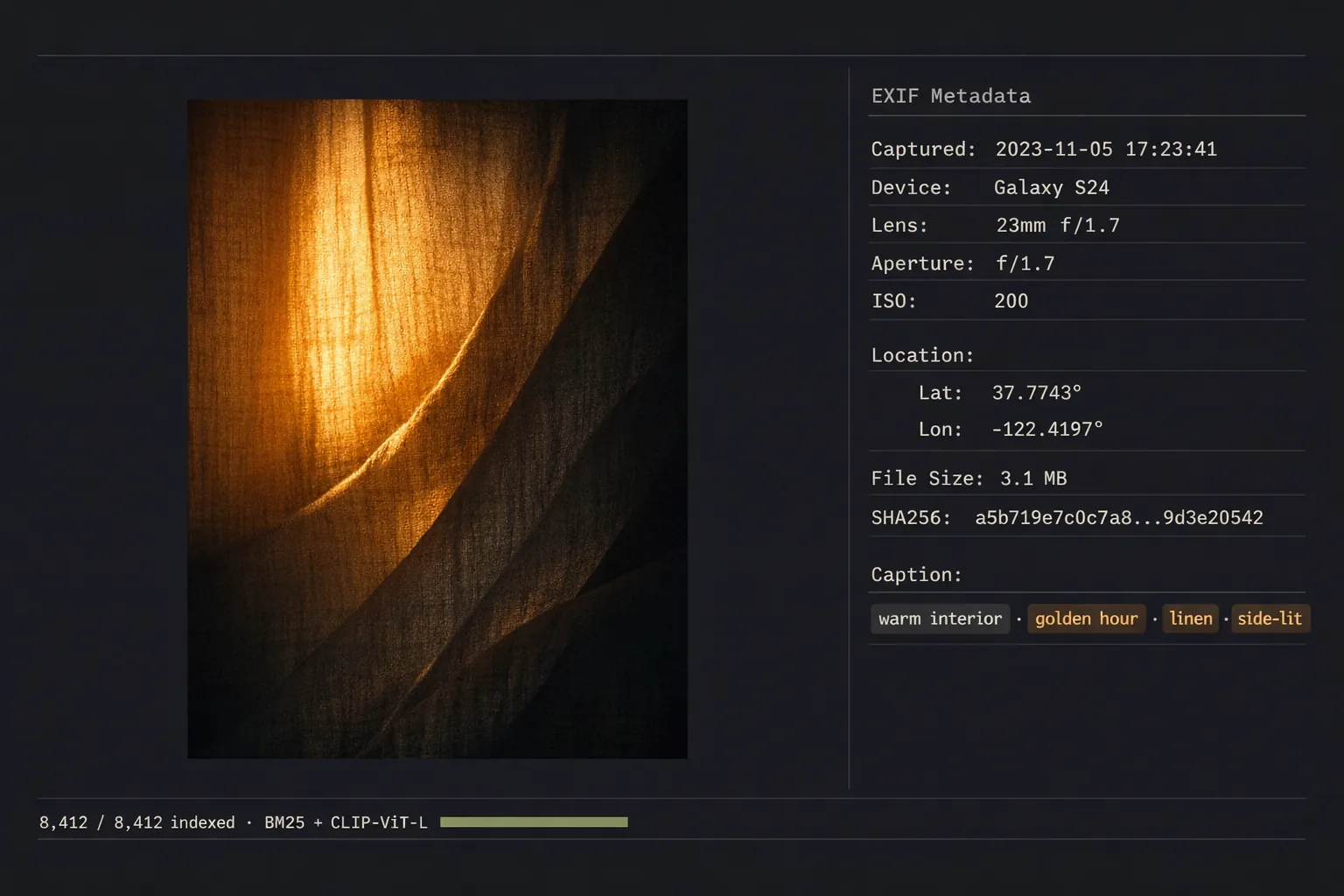

| Caption | Local VLM | Captions and tags applied at ingest |

| Network | Tailscale | Phone ↔ box without an open port |

| Mobile | React Native | Browse · search · upload, single-user |

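```python
# Single-tenant by design: the phone authenticates via Tailscale
# device identity, not a token in a database. Caption + embed
# happen in-process at ingest; nothing is queued for the cloud.
from datetime import datetime

from fastapi import APIRouter, Depends, Form, UploadFile

router = APIRouter()

# Helpers not shown in this excerpt: tailscale_only, stream_to_disk, exists,
# read_exif, caption_local, embed_clip, db, Asset, ORIGINALS_DIR.


@router.post("/ingest", dependencies=[Depends(tailscale_only)])
async def ingest(file: UploadFile, captured_at: datetime = Form(...)):
    # Originals are stored under their content hash, so re-uploads are detectable.
    sha = await stream_to_disk(file, ORIGINALS_DIR)
    if exists(sha):
        return {"sha": sha, "status": "duplicate"}

    exif = read_exif(ORIGINALS_DIR / sha)
    caption = await caption_local(ORIGINALS_DIR / sha)  # local VLM
    clip_vec = embed_clip(ORIGINALS_DIR / sha)          # 512-d
    # Text indexed by BM25: the generated caption plus any EXIF description.
    bm25_doc = caption + " " + (exif.get("description") or "")

    db.insert(Asset(
        sha=sha, captured_at=captured_at, exif=exif,
        caption=caption, clip=clip_vec, bm25=bm25_doc,
    ))
    return {"sha": sha, "status": "ingested", "caption": caption}
```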
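The `tailscale_only` dependency used by the endpoint above is not shown in the source. As a rough sketch of a weaker approximation, assuming it is acceptable to check only the caller's address, the function below tests whether an IP falls inside the ranges Tailscale assigns to devices. `TAILSCALE_NETS` and `is_tailscale_address` are hypothetical names. True device identity would more likely come from asking the local Tailscale daemon who owns the connection, which this sketch does not attempt.

```python
import ipaddress

# Address ranges Tailscale assigns to devices on a tailnet.
TAILSCALE_NETS = (
    ipaddress.ip_network("100.64.0.0/10"),        # IPv4 (shared CGNAT range)
    ipaddress.ip_network("fd7a:115c:a1e0::/48"),  # IPv6 (unique local range)
)


def is_tailscale_address(host: str) -> bool:
    """Return True if host parses as an IP inside a Tailscale range."""
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        # Not an IP literal at all (e.g. a hostname or empty string).
        return False
    # Membership is False when address and network versions differ.
    return any(addr in net for net in TAILSCALE_NETS)


print(is_tailscale_address("100.101.102.103"))
print(is_tailscale_address("192.168.1.10"))
```

In FastAPI, a dependency could pass `request.client.host` to this function and raise a 403 when it returns `False`. The check is only meaningful if the server is reachable solely over the Tailscale interface, since 100.64.0.0/10 is shared carrier-grade NAT space.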

```json
{
  "sha": "9c4a83e2f7…",
  "captured_at": "<iso8601-from-exif>",
  "exif": {
    "Make": "samsung",
    "Model": "SM-S921U",
    "GPSLatitude": 45.7341,
    "GPSLongitude": -122.6741
  },
  "caption": "Two children running on a wet beach at golden hour, evergreen forest in the background.",
  "clip_dims": 512,
  "bm25_terms": 23,
  "indexed_at": "<iso8601-utc>",
  "_origin": "tailscale://galaxy-s24"
}
```
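The `sha` field in the record above, together with the duplicate check in the ingest endpoint, suggests originals are stored under a content hash. The source does not show `stream_to_disk` or say which hash it uses, so the following is only a synchronous sketch under the assumption of SHA-256. `store_content_addressed` and `CHUNK_SIZE` are hypothetical names.

```python
import hashlib
import io
import os
import tempfile
from pathlib import Path
from typing import BinaryIO

CHUNK_SIZE = 1024 * 1024  # read 1 MiB at a time


def store_content_addressed(stream: BinaryIO, originals_dir: Path) -> tuple[str, bool]:
    """Copy stream into originals_dir under its SHA-256 hex digest.

    Returns (digest, is_duplicate). The upload is hashed while it is written,
    so the file is read only once.
    """
    originals_dir.mkdir(parents=True, exist_ok=True)
    hasher = hashlib.sha256()
    # Temp file lives in the target directory so the final rename stays on one filesystem.
    fd, tmp_name = tempfile.mkstemp(dir=originals_dir, suffix=".part")
    try:
        with os.fdopen(fd, "wb") as tmp:
            while chunk := stream.read(CHUNK_SIZE):
                hasher.update(chunk)
                tmp.write(chunk)
        digest = hasher.hexdigest()
        target = originals_dir / digest
        if target.exists():
            return digest, True
        os.replace(tmp_name, target)
        return digest, False
    finally:
        # Remove the temp file if it was not renamed into place.
        if os.path.exists(tmp_name):
            os.unlink(tmp_name)


with tempfile.TemporaryDirectory() as d:
    first = store_content_addressed(io.BytesIO(b"photo bytes"), Path(d))
    second = store_content_addressed(io.BytesIO(b"photo bytes"), Path(d))
print(first[1], second[1])
```

Returning the duplicate flag from the same call that writes the file avoids checking the disk after the write, where a freshly stored file would always look like a duplicate.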

What’s next

The Google Photos / Drive importer is slated for the next milestone. The goal is to pull everything down once, verify it landed, and then delete from Google. Albums is the destination; the importer is the bridge that lets it actually replace what came before.
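The "verify it landed" step could be approached by comparing hashes, though the importer does not exist yet and the source gives no design for it. The sketch below assumes the export has been downloaded to a local directory and that Albums identifies assets by the SHA-256 of their original bytes. Both are assumptions, and `sha256_of` and `missing_from_albums` are hypothetical names.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Hash a file in 1 MiB chunks so large videos are never loaded whole."""
    hasher = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(1024 * 1024), b""):
            hasher.update(chunk)
    return hasher.hexdigest()


def missing_from_albums(export_dir: Path, ingested_shas: set[str]) -> list[Path]:
    """Return exported files whose hash is not among the ingested assets."""
    return sorted(
        p for p in export_dir.rglob("*")
        if p.is_file() and sha256_of(p) not in ingested_shas
    )


# Tiny demonstration with two stand-in files, only one of them "ingested".
with tempfile.TemporaryDirectory() as d:
    root = Path(d)
    (root / "a.jpg").write_bytes(b"a")
    (root / "b.jpg").write_bytes(b"b")
    ingested = {hashlib.sha256(b"a").hexdigest()}
    missing_names = [p.name for p in missing_from_albums(root, ingested)]
print(missing_names)
```

Under this scheme, deleting from Google would be gated on the returned list being empty.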

Acknowledgments

Albums stands on FastAPI, SQLite, CLIP from OpenAI, the BM25 implementations in rank-bm25 and Tantivy, Tailscale, and the long lineage of self-hosting projects (Nextcloud, Immich, PhotoPrism) that made the case for owning your photos before I needed to make it for myself.